Handling large files in Python can be a daunting task, especially when dealing with memory limitations and performance issues. However, Python provides a range of tools and techniques to manage and process large files efficiently. In this article, we'll explore several strategies and best practices for handling large files in Python, so that your code runs smoothly and efficiently.

1. Understanding Large Files

A large file is any file that is too big to be comfortably processed in memory. This might include text files, CSVs, logs, images, or binary data. When working with large files, it's essential to understand the implications of file size for performance, memory usage, and data handling.

Why Is It Challenging?

Memory Limitations: Loading a large file entirely into memory can lead to crashes or slow performance because of limited RAM.

Performance Issues: Reading and writing large files can be time-consuming. Optimizing these operations is crucial.

Data Integrity: Ensuring the integrity of data when reading from or writing to files is critical, particularly in applications that require reliability.

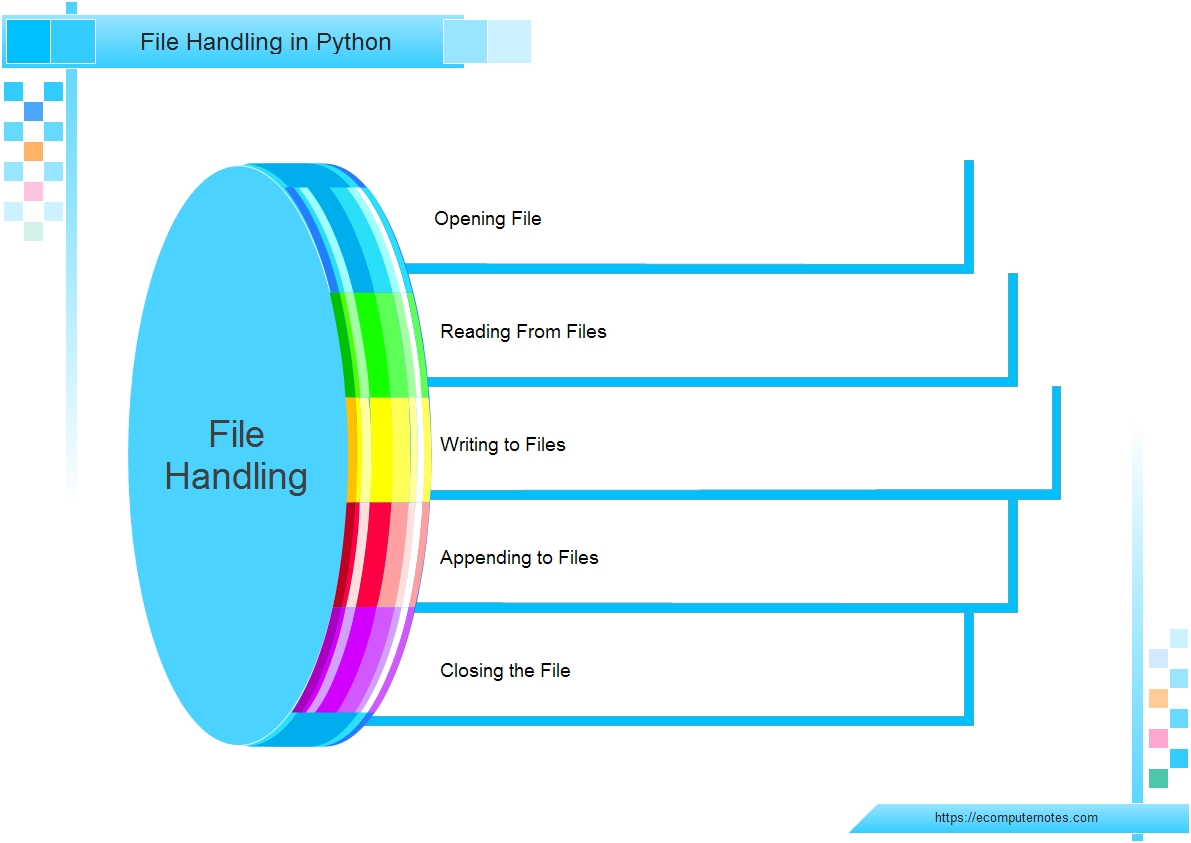

2. Basic File Operations in Python

Before diving into handling large files, let's revisit basic file operations in Python:

    # Opening a file
    with open('example.txt', 'r') as file:
        content = file.read()  # Read the entire content

    # Writing to a file
    with open('output.txt', 'w') as file:
        file.write("Hello, World!")  # Write a string to the file

Using the with statement is recommended because it ensures that files are properly closed after the block completes, even if an exception is raised.
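To make that guarantee concrete, here is a minimal sketch showing that the file is closed even though the body of the with block raises. The helper `write_then_fail` and the file name `demo_with.txt` are made up for illustration.

```python
import os
import tempfile


def write_then_fail(path):
    """Write to a file, then raise inside the with block.

    Returns the file object so the caller can inspect its state afterwards.
    """
    handle = None
    try:
        with open(path, "w") as file:
            handle = file
            file.write("partial data\n")
            raise ValueError("simulated failure")
    except ValueError:
        pass  # By the time we get here, the with statement has closed the file
    return handle


# Hypothetical scratch file in a temporary directory
demo_path = os.path.join(tempfile.mkdtemp(), "demo_with.txt")
handle = write_then_fail(demo_path)
print(handle.closed)  # True
```

Because closing also flushes buffered output, the text written before the exception is still on disk.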

3. Efficient Techniques for Handling Large Files

3.1. Reading Files in Chunks

One of the most effective ways to deal with large files is to read them in smaller chunks. This approach minimizes memory usage and allows you to process data sequentially.

Example: Reading a File Line by Line

Rather than loading the entire file into memory, read it line by line:

    with open('large_file.txt', 'r') as file:
        for line in file:
            process(line)  # Replace with your processing function
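As one possible stand-in for the processing step, the sketch below counts the lines that contain a keyword. The function `count_matching_lines` and the sample log content are assumptions made for the example; only one line is held in memory at a time.

```python
import os
import tempfile


def count_matching_lines(path, keyword):
    """Count lines containing keyword, reading one line at a time."""
    matches = 0
    with open(path, "r", encoding="utf-8") as file:
        for line in file:
            if keyword in line:
                matches += 1
    return matches


# Build a small sample log so the sketch runs on its own
sample_path = os.path.join(tempfile.mkdtemp(), "sample.log")
with open(sample_path, "w", encoding="utf-8") as file:
    file.write("INFO start\nERROR disk full\nINFO retry\nERROR timeout\n")

print(count_matching_lines(sample_path, "ERROR"))  # 2
```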

Example: Reading Fixed-Size Chunks

You can also read a specific number of bytes at a time, which can be more efficient for binary files:

    chunk_size = 1024  # 1 KB

    with open('large_file.bin', 'rb') as file:
        while True:
            chunk = file.read(chunk_size)
            if not chunk:
                break
            process(chunk)  # Replace with your processing function
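A common use of this loop is computing a checksum without holding the whole file in memory. The sketch below assumes SHA-256 from the standard hashlib module as the processing step; the function name `sha256_of_file` and the 64 KB default chunk size are illustrative choices, not recommendations from the article.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path, chunk_size=64 * 1024):
    """Hash a file by feeding it to hashlib one chunk at a time."""
    digest = hashlib.sha256()
    with open(path, "rb") as file:
        while True:
            chunk = file.read(chunk_size)
            if not chunk:
                break  # read() returns b"" at end of file
            digest.update(chunk)
    return digest.hexdigest()


# Sample binary file so the sketch runs on its own
payload = b"abc" * 100_000
sample_path = os.path.join(tempfile.mkdtemp(), "sample.bin")
with open(sample_path, "wb") as file:
    file.write(payload)

# The chunked digest matches the digest of the full payload
print(sha256_of_file(sample_path) == hashlib.sha256(payload).hexdigest())
```

The result does not depend on the chunk size, so the size can be tuned for throughput without affecting correctness.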

3.2. Using the fileinput Module

The fileinput module can be helpful when you want to iterate over lines from multiple input streams. This is particularly useful when combining files.

    import fileinput

    for line in fileinput.input(files=('file1.txt', 'file2.txt')):
        process(line)  # Replace with your processing function
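When combining files, it often helps to know which file each line came from. The sketch below uses the `filename()` and `filelineno()` methods of the object returned by `fileinput.input()`; the helper `numbered_lines` and the sample files `a.txt` and `b.txt` are assumptions made for the example.

```python
import fileinput
import os
import tempfile


def numbered_lines(paths):
    """Yield (file name, line number within that file, text) for every line."""
    with fileinput.input(files=paths) as stream:
        for line in stream:
            yield (
                os.path.basename(stream.filename()),
                stream.filelineno(),
                line.rstrip("\n"),
            )


# Two small sample files so the sketch runs on its own
folder = tempfile.mkdtemp()
first = os.path.join(folder, "a.txt")
second = os.path.join(folder, "b.txt")
with open(first, "w") as f:
    f.write("one\ntwo\n")
with open(second, "w") as f:
    f.write("three\n")

result = list(numbered_lines([first, second]))
print(result)
```

Using the stream as a context manager closes it once iteration finishes, which matters if `fileinput.input()` is called again later in the same program.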

3.3. Memory-Mapped Files

For very large files, consider using memory-mapped files. The mmap module allows you to map a file directly into memory, so that you can access it as if it were a byte array.

    import mmap

    with open('large_file.bin', 'r+b') as f:
        mmapped_file = mmap.mmap(f.fileno(), 0)  # Map the entire file
        # Read data from the memory-mapped file
        data = mmapped_file[:100]  # Read the first 100 bytes
        mmapped_file.close()

Memory-mapped files are particularly useful for random access patterns in large files.
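As a sketch of random access, the example below searches a file for a byte sequence through a read-only mapping. The function `find_offset` and the sample data are assumptions made for illustration; `mmap.ACCESS_READ` and the `find()` method come from the standard mmap module.

```python
import mmap
import os
import tempfile


def find_offset(path, needle):
    """Return the byte offset of the first occurrence of needle, or -1."""
    with open(path, "rb") as f:
        # Length 0 maps the whole file; ACCESS_READ makes the mapping read-only
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mapped:
            return mapped.find(needle)


# Sample file with a marker in the middle so the sketch runs on its own
sample_path = os.path.join(tempfile.mkdtemp(), "sample.bin")
with open(sample_path, "wb") as f:
    f.write(b"\x00" * 5000 + b"MARKER" + b"\x00" * 5000)

print(find_offset(sample_path, b"MARKER"))  # 5000
```

Note that mapping an empty file raises ValueError, so real code may need to check the file size first.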

3.4. Using pandas for Large Data Files

For structured data like CSV or Excel files, the pandas library offers efficient methods for handling large datasets. The read_csv function supports chunking as well.

Example: Reading Large CSV Files in Chunks

    import pandas as pd

    chunk_size = 10000  # Number of rows per chunk

    for chunk in pd.read_csv('large_file.csv', chunksize=chunk_size):
        process(chunk)  # Replace with your processing function

pandas also provides a wealth of functionality for data manipulation and analysis.
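One way to use chunked reading is to keep a running aggregate, so that only one chunk is in memory at any moment. The sketch below assumes pandas is installed (it is a third-party package) and uses a made-up `amount` column and helper `column_total`.

```python
import os
import tempfile

import pandas as pd


def column_total(path, column, chunk_size=10000):
    """Sum one column of a CSV file by accumulating per-chunk sums."""
    total = 0
    for chunk in pd.read_csv(path, chunksize=chunk_size):
        total += chunk[column].sum()
    return total


# Sample CSV with the values 1..100 so the sketch runs on its own
sample_path = os.path.join(tempfile.mkdtemp(), "sales.csv")
pd.DataFrame({"amount": range(1, 101)}).to_csv(sample_path, index=False)

print(column_total(sample_path, "amount", chunk_size=30))  # 5050
```

The same pattern works for counts, minima, and maxima. Statistics such as medians cannot be combined chunk by chunk this simply.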

3.5. Generators for Large Files

Generators are a powerful way to handle large files because they yield one item at a time and can be iterated over without loading the entire file into memory.

Example: Creating a Generator Function

    def read_large_file(file_path):
        with open(file_path, 'r') as file:
            for line in file:
                yield line.strip()  # Yield each line without surrounding whitespace

    for line in read_large_file('large_file.txt'):
        process(line)  # Replace with your processing function
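Generators can also be chained, so that each stage pulls one item at a time from the stage before it. The following sketch is one possible arrangement; the stage names `read_lines`, `non_empty`, and `field` and the sample data are assumptions made for illustration.

```python
import os
import tempfile


def read_lines(path):
    """Yield each line of the file without its trailing newline."""
    with open(path, "r", encoding="utf-8") as file:
        for line in file:
            yield line.rstrip("\n")


def non_empty(lines):
    """Skip lines that are empty or contain only whitespace."""
    for line in lines:
        if line.strip():
            yield line


def field(lines, index, sep=","):
    """Yield one separator-delimited field from each line."""
    for line in lines:
        yield line.split(sep)[index]


# Sample file with blank lines mixed in so the sketch runs on its own
sample_path = os.path.join(tempfile.mkdtemp(), "people.txt")
with open(sample_path, "w", encoding="utf-8") as file:
    file.write("alice,30\n\nbob,25\n   \ncarol,41\n")

names = list(field(non_empty(read_lines(sample_path)), 0))
print(names)  # ['alice', 'bob', 'carol']
```

Nothing is read from disk until the final stage is iterated, and no stage ever holds more than the current line.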

4. Writing Large Files Efficiently

4.1. Writing in Chunks

As with reading, when writing large files, consider writing data in chunks to minimize memory usage:

    with open('output_file.txt', 'w') as file:
        for chunk in data_chunks:  # Assume data_chunks is a list of data chunks (strings)
            file.write(chunk)
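If the chunks themselves would not fit in a list, they can come from a generator instead, so each one is produced just before it is written. This is a sketch under that assumption; `generate_records` and `write_records` are hypothetical helpers.

```python
import os
import tempfile


def generate_records(count):
    """Yield one text record at a time instead of building a full list."""
    for i in range(count):
        yield f"record {i}\n"


def write_records(path, records):
    """Write records as they are produced and return how many were written."""
    written = 0
    with open(path, "w", encoding="utf-8") as file:
        for record in records:
            file.write(record)
            written += 1
    return written


output_path = os.path.join(tempfile.mkdtemp(), "output_file.txt")
print(write_records(output_path, generate_records(1000)))  # 1000
```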

4.2. Using the csv Module for CSV Files

The csv module provides a simple way to write large CSV files efficiently:

    import csv

    with open('output_file.csv', 'w', newline='') as csvfile:
        writer = csv.writer(csvfile)
        for row in data:  # Assume data is a list of rows
            writer.writerow(row)
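The writer also has a `writerows()` method that accepts any iterable of rows, including a generator, so the rows never need to exist as a complete list. The sketch below assumes a made-up row source `generate_rows` and helper `write_csv`.

```python
import csv
import os
import tempfile


def generate_rows(count):
    """Yield (n, n squared) rows one at a time."""
    for i in range(count):
        yield (i, i * i)


def write_csv(path, header, rows):
    """Write a header and then stream the rows to a CSV file."""
    with open(path, "w", newline="", encoding="utf-8") as csvfile:
        writer = csv.writer(csvfile)
        writer.writerow(header)
        writer.writerows(rows)  # Consumes the iterable row by row


output_path = os.path.join(tempfile.mkdtemp(), "output_file.csv")
write_csv(output_path, ["n", "square"], generate_rows(5))

# Read the file back to check what was written
with open(output_path, newline="", encoding="utf-8") as csvfile:
    rows_read = list(csv.reader(csvfile))
print(rows_read[:3])  # [['n', 'square'], ['0', '0'], ['1', '1']]
```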

4.3. Appending to Files

To add data to an existing file, open it in append mode:

    with open('output_file.txt', 'a') as file:
        file.write(new_data)  # Replace with your new data
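The short sketch below illustrates how append mode behaves across repeated opens: the first call creates the file and later calls add to the end without touching earlier content. The helper `append_line` and the sample text are made up for the example.

```python
import os
import tempfile


def append_line(path, text):
    """Append one line of text to the file, creating it if necessary."""
    with open(path, "a", encoding="utf-8") as file:
        file.write(text + "\n")


log_path = os.path.join(tempfile.mkdtemp(), "output_file.txt")
append_line(log_path, "first run")   # Creates the file, since it does not exist yet
append_line(log_path, "second run")  # Adds to the end; earlier content is kept

with open(log_path, encoding="utf-8") as file:
    contents = file.read()
print(contents)
```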

5. Conclusion

Handling large files in Python requires careful consideration of memory usage and performance. By employing techniques such as reading files in chunks, using memory-mapped files, and leveraging libraries like pandas, you can efficiently manage large datasets without overwhelming your system's resources. Whether you're processing text files, CSVs, or binary data, the techniques outlined in this article will help you handle large files effectively and keep your applications performant and responsive.

6. Further Reading

Python Documentation on File Handling

pandas Documentation

Python mmap Module

fileinput Documentation

By integrating these techniques into your workflow, you can make the most of Python's capabilities and efficiently handle even the largest of files. Happy coding!